- Bittensor DAO approved TAO bridges to Arbitrum and Base. Wrapped TAO gains DeFi and governance utility on EVM chains.

- TAO Foundation launched a $100M grant program for decentralized AI projects: data validation, compute markets, zk-inference.

Bittensor (TAO) is trading at approximately $416.23 USD, maintaining a strong position within the AI and decentralized machine learning sector.

With 33.39 million TAO in circulation and a capped maximum supply, the asset has become one of the most sought-after tokens for infrastructure-scale decentralized AI applications.
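As a quick sanity check, the price and circulating supply quoted above imply a market capitalization of roughly $13.9 billion. The sketch below only multiplies the two figures from this article; the variable names are illustrative, and the result moves with the price.

```python
# Figures as quoted in this article (they change with the market).
price_usd = 416.23            # quoted TAO price in USD
circulating_supply = 33.39e6  # quoted circulating supply in TAO

# Market capitalization = price x circulating supply.
market_cap_usd = price_usd * circulating_supply

print(f"Implied market cap: ${market_cap_usd / 1e9:.2f}B")
```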

TAO has been consolidating in a broad ascending range, holding above the $390 support level. It is now targeting a move back above $430, a short-term resistance area; a break above it on strong volume could open a push toward $465–$480.

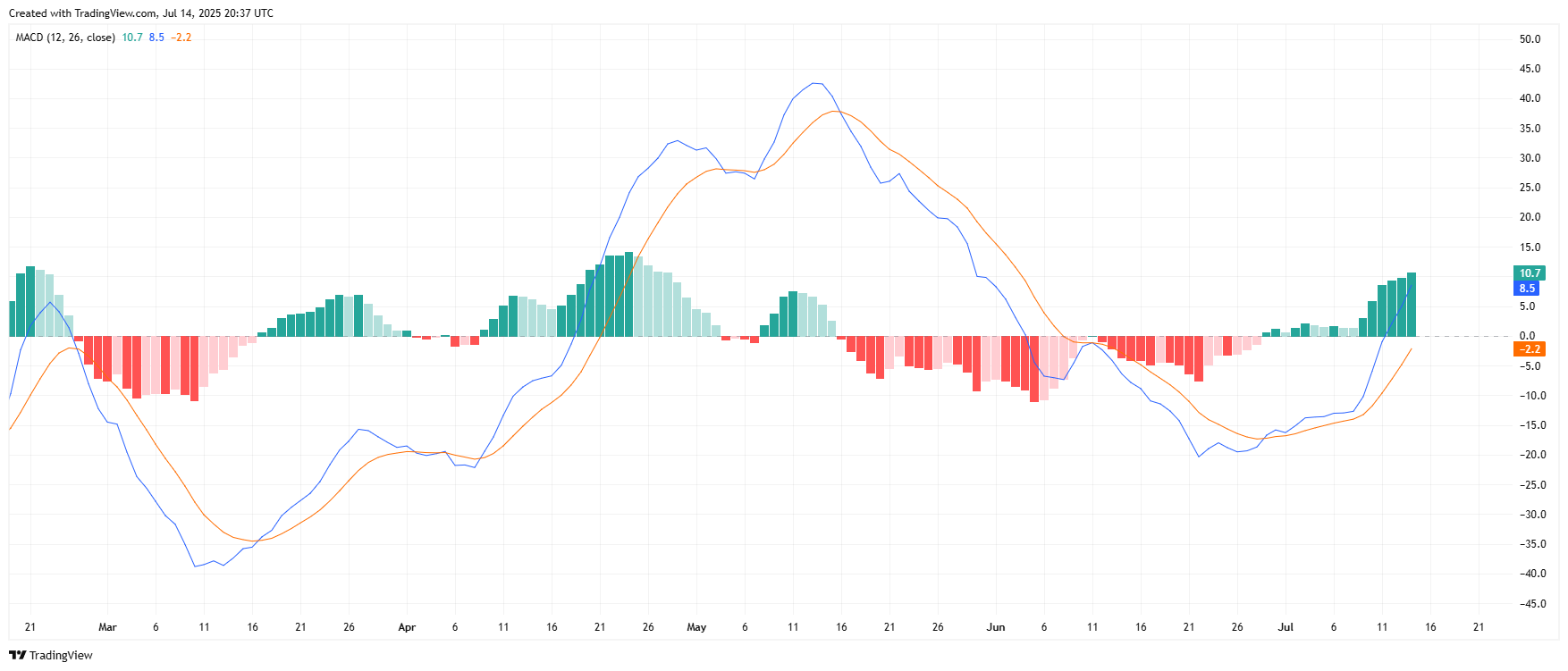

The RSI is hovering around 63, indicating continued bullish momentum without being overbought (readings above 70 are conventionally treated as overbought). The MACD shows a bullish crossover forming again on the 4-hour chart, and price has reclaimed the 50-day moving average.
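For readers unfamiliar with these indicators, here is a minimal sketch of how RSI and a MACD crossover are commonly computed from a list of closing prices. It assumes the conventional defaults (14-period Wilder RSI, 12/26/9 MACD), which the article does not specify, and the function names are illustrative rather than taken from any particular charting tool.

```python
def rsi(closes, period=14):
    """Wilder's RSI for the most recent close, on a 0-100 scale."""
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 closes")
    deltas = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(d, 0.0) for d in deltas]
    losses = [max(-d, 0.0) for d in deltas]
    # Seed with simple averages, then apply Wilder's smoothing.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period
    for g, l in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + g) / period
        avg_loss = (avg_loss * (period - 1) + l) / period
    if avg_loss == 0:
        return 100.0
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


def ema(values, span):
    """Exponential moving average seeded with the first value."""
    alpha = 2.0 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes, fast=12, slow=26, signal=9):
    """Return (macd_line, signal_line) for a series of closes."""
    fast_ema = ema(closes, fast)
    slow_ema = ema(closes, slow)
    macd_line = [f - s for f, s in zip(fast_ema, slow_ema)]
    signal_line = ema(macd_line, signal)
    return macd_line, signal_line


def bullish_crossover(macd_line, signal_line):
    """True if MACD rose from at-or-below the signal line to above it on the last bar."""
    return macd_line[-2] <= signal_line[-2] and macd_line[-1] > signal_line[-1]
```

Fed with 4-hour closes, `rsi(closes)` would return a reading comparable to the value cited above, and `bullish_crossover(*macd(closes))` would flag the bar on which the MACD line crosses above its signal line.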

TAO’s structure remains favorable as long as it holds above the $380–$390 range, with long-term support at $350.

A new integration lets OpenAI-compatible inference models run on Bittensor’s network via Subnet 17, so developers can deploy GPT-class agents directly within the decentralized ecosystem. This is a major interoperability milestone for AI-native blockchains.

Bittensor DAO Approves Multichain Bridge and Ethereum L2 Expansion

The community has approved the deployment of TAO token bridges to Arbitrum and Base, enabling wrapped TAO usage in DeFi protocols and governance tools across EVM-compatible chains. Liquidity pools and staking vaults are expected to launch in the coming weeks.

TAO Foundation Announces $100M AI Research Grant Program

The TAO Foundation has unveiled a $100 million ecosystem fund to support decentralized AI projects, targeting teams building data validation networks, compute marketplaces, and zero-knowledge inference models on Bittensor.

Bittensor Achieves 98% Model Uptime on Mission-Critical Inference Subnets

With validator reliability and reward systems maturing, several TAO Subnets have demonstrated uptime rates above 98%, outperforming centralized AI hosting platforms in terms of resilience and decentralization.

Partnership with Nvidia-Powered Decentralized GPU Network

TAO is now partially backed by a decentralized compute network that uses Nvidia A100 and H100 GPUs. The network enables large-scale AI training through token incentives, further reducing dependence on centralized infrastructure.